INTRODUCTION

Visual impairment severely restricts the daily lives of blind people. To help them walk safely in complicated road conditions, it is necessary to guide them along a walkable path (Nagarajan and Gopinath, 2023). Warning of unforeseen objects is imperative for visually impaired people (VIP), since such objects frequently become obstacles that limit their mobility. Identifying abnormalities in surveillance videos is vital for maintaining security in applications such as abandoned object detection, crime recognition, parking area monitoring, accident detection, and illegal activity detection (Abraham et al., 2020). However, manual recognition of anomalies in surveillance videos is labor-intensive and tedious. Owing to the vast quantity of data produced by security applications, manual inspection is not a practical solution (Abdul-Ameer et al., 2022). Consequently, demand is rising for automatic systems that find video anomalies. Such systems include biometric identification of individuals, video-based detection of abnormal behavior, alarm-based monitoring of closed-circuit television scenes, and automatic recognition of traffic violations (Abdusalomov et al., 2022). Automatic systems reduce human time and labor, making anomaly detection in surveillance videos more cost-effective and efficient (Mukhiddinov et al., 2022). Researchers have modeled many approaches using machine learning and image processing methods. Many common vision-based guidance mechanisms cannot handle obstacle detection well, as they assign every pixel to one of a set of predefined simple classes (Bhalekar and Bedekar, 2022). The unlimited variety of unexpected on-road objects makes it difficult to guarantee that human assistance alone can ensure safety.

Many computer vision (CV) works focus on processes such as activity learning, data acquisition, scene learning, behavioral learning, and feature extraction (Iqbal et al., 2022). The main intention of this line of research is to address tasks such as anomaly prediction, video processing, vehicle prediction and monitoring, activity analysis, scene detection, traffic monitoring, human behavior learning, and multi-camera schemes and their challenges (Busaeed et al., 2022). Anomaly prediction is a sub-domain of behavior learning from captured visual scenes. The availability of video from public places has stimulated video analysis as well as anomaly prediction (Dhou et al., 2022). Anomaly estimation methods learn the typical behavior from the training data; any deviation from normal behavior is treated as an anomaly (Choi et al., 2019). Typical examples of anomalies include vehicles on pathways, sudden dispersal of people from a crowd, a person fainting while walking, running a signal at a traffic junction, jaywalking, and vehicles making U-turns at red signals.

Akilandeswari et al. (2022) introduced a denoising autoencoder (DAE) combined with a convolutional neural network (CNN), termed DAECNN, to recognize the current position of the user. The DAECNN model uses the DAE to reconstruct the noisy image and the CNN to classify the present location of the user. Jiang et al. (2018) leverage image quality assessment to select the images captured by the vision sensor, which ensures the input quality of the scene for the final detection method. First, a binocular vision sensor captures images at a fixed frequency, and the useful ones are selected according to a stereo image quality calculation. Next, the captured images are transferred to the cloud for further computing. Finally, automated detection is performed on the selected images using a CNN trained with big data.

Al-Madani et al. (2019) explored indoor localization techniques using Bluetooth Low Energy (BLE) beacons. The study fed the received signal strength indicator (RSSI) of the BLE beacons and the geometric distance from the current beacons to the fingerprint point into a fuzzy logic (FL) framework, which evaluates the Euclidean distance to determine the next position. Based on the outcomes, the fingerprinting model using type-2 FL (hesitant fuzzy set) was suitable for indoor localization with BLE beacons. Dimas et al. (2021) developed a self-supervised CNN-based technique that learns an obstacle detection method and mimics it with considerably lower computational requirements, for safer navigation of VIP. The CNN takes RGB images as input and outputs saliency maps that softly indicate the image regions corresponding to potentially high-risk obstacles.

Cheng et al. (2021) developed a new hierarchical visual localization pipeline for wearable assistive navigation devices for VIP. The presented method includes deep descriptor networks, online sequence matching, and 2D-3D geometric verification. Images in different modalities (infrared, depth, and RGB) are fed into the Dual Desc network to generate local features and robust attentive global descriptors. The global descriptor is used to retrieve coarse candidates for the query image. Jasman et al. (2022) developed an IoT-based technique that assists in detecting water puddles and obstacles. The proposed method comprises a walking stick and an Android app. The walking stick incorporates an ultrasonic sensor and an ESP32 microcontroller and is paired with a mobile phone application. Communication between the smartphone and the ESP32 microcontroller is implemented with MIT App Inventor.

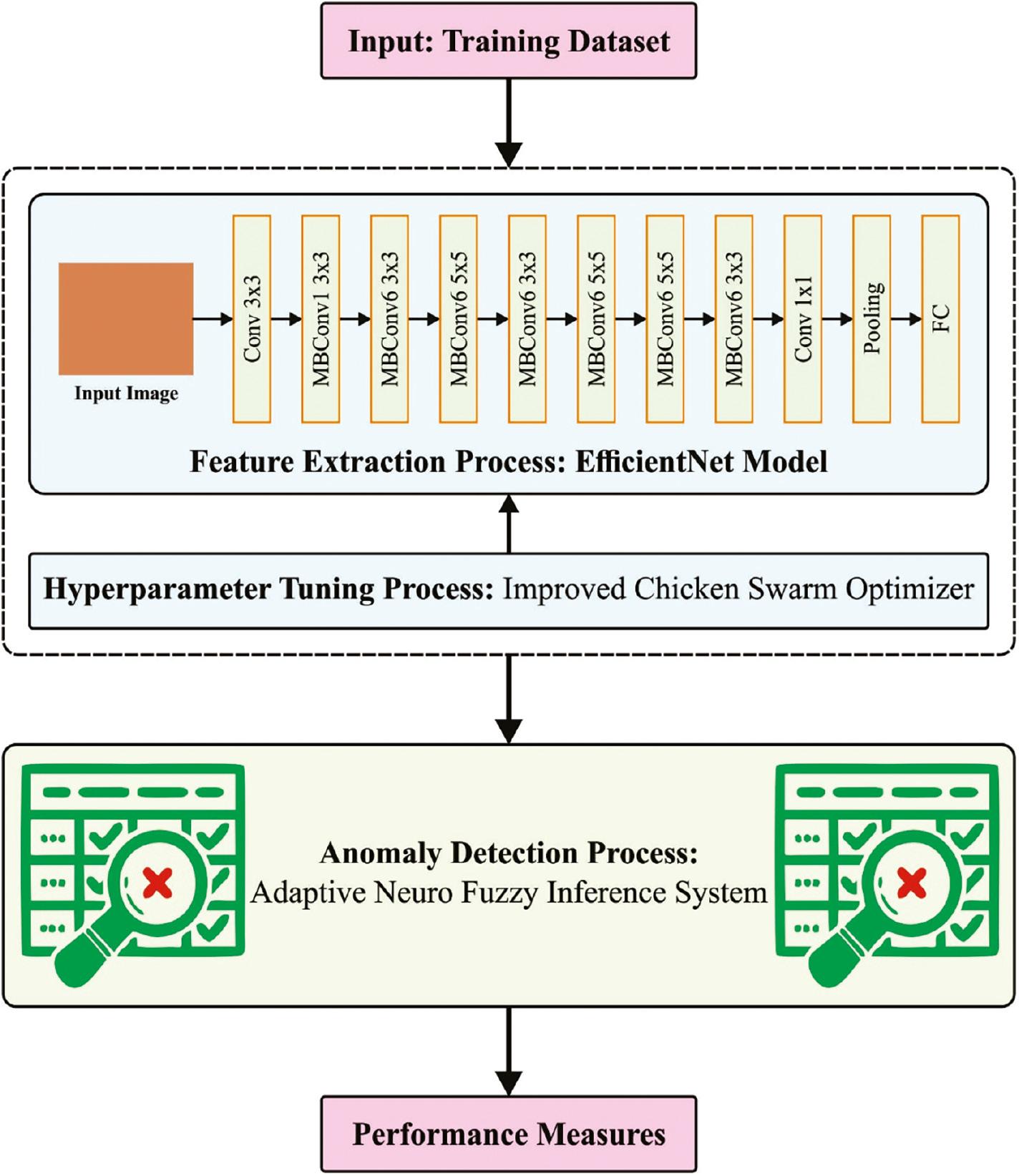

This study designs an Improved Chicken Swarm Optimizer with Vision-based Anomaly Detection (ICSO-VBAD) technique on surveillance videos for visually challenged people. The purpose of the ICSO-VBAD technique is to identify and classify anomalies in order to assist visually challenged people. To achieve this, the ICSO-VBAD technique uses the EfficientNet model to produce a collection of feature vectors. The ICSO algorithm is applied for the hyperparameter tuning of the EfficientNet model. For the identification and classification of anomalies, the adaptive neuro-fuzzy inference system (ANFIS) model is used. The ICSO-VBAD algorithm is evaluated on benchmark datasets.

The rest of the paper is organized as follows. The next section presents the proposed model, followed by the result analysis and the conclusion of the study.

THE PROPOSED MODEL

In this manuscript, we have proposed an automated anomaly detection approach, named the ICSO-VBAD system, for visually challenged people. The purpose of the ICSO-VBAD technique is to identify and classify anomalies in order to assist visually challenged people. It involves several subprocesses: EfficientNet-based feature extraction, ICSO-based parameter tuning, and ANFIS-based anomaly detection. Figure 1 illustrates the overall flow of the ICSO-VBAD algorithm.

Figure 1: Overall flow of the ICSO-VBAD approach. Abbreviation: ICSO-VBAD, Improved Chicken Swarm Optimizer with Vision-based Anomaly Detection.

EfficientNet model

The ICSO-VBAD technique utilizes the EfficientNet model to produce a collection of feature vectors. EfficientNet is a CNN family that achieves remarkable results on image classification tasks while maintaining efficiency (Ab Wahab et al., 2021). The model builds on the observation that scaling up a network dimension (depth, width, or input resolution) tends to improve performance, but scaling a single dimension in isolation increases the computational cost while yielding diminishing accuracy returns. EfficientNet therefore uses a compound scaling technique that scales depth, width, and resolution jointly with a fixed set of scaling coefficients, so that the three dimensions stay balanced. This scaling technique has been shown to perform better than conventional techniques that scale each dimension independently. EfficientNet has achieved outstanding performance on benchmark datasets including CIFAR10, CIFAR100, and ImageNet while requiring less computation and fewer parameters than other CNN models.
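As an illustration of compound scaling, the sketch below computes the depth, width, and resolution multipliers for a compound coefficient φ. The base coefficients α = 1.2, β = 1.1, and γ = 1.15 are the values reported for the EfficientNet-B0 baseline and are not stated in this paper; the class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CompoundScale:
    depth: float       # multiplier on the number of layers
    width: float       # multiplier on the number of channels
    resolution: float  # multiplier on the input image side length


def compound_scale(phi: float, alpha: float = 1.2, beta: float = 1.1,
                   gamma: float = 1.15) -> CompoundScale:
    """Return the multipliers d = alpha**phi, w = beta**phi, r = gamma**phi."""
    return CompoundScale(alpha ** phi, beta ** phi, gamma ** phi)


def flops_factor(scale: CompoundScale) -> float:
    """Approximate growth in FLOPs: depth * width**2 * resolution**2."""
    return scale.depth * scale.width ** 2 * scale.resolution ** 2


if __name__ == "__main__":
    for phi in range(4):
        s = compound_scale(phi)
        print(phi, round(s.depth, 3), round(s.width, 3),
              round(s.resolution, 3), round(flops_factor(s), 2))
```

With these base coefficients, α·β²·γ² is close to 2, so each unit increase of φ roughly doubles the computational cost while keeping the three dimensions in a fixed ratio.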

Parameter tuning using ICSO algorithm

In the ICSO-VBAD technique, the ICSO algorithm is applied for the hyperparameter tuning of the EfficientNet model. CSO is a stochastic optimization technique that mimics the behavior of chickens and the hierarchy in the swarm (Li et al., 2023). The fundamental concept of CSO is to categorize chickens into roosters, hens, and chicks according to their fitness values. Under a set of rules, the different types of chickens follow different movement rules and compete with one another in the search for food. CSO is a global optimization technique.

The CSO applies the following rules. The chicken swarm contains several groups. Each group has one dominant rooster, several hens, and some chicks. Fitness determines the status of each chicken. The best-adapted chickens act as roosters, each being the head rooster of a group. The least-adapted chickens are designated as chicks, and the remaining ones are hens. Each hen randomly chooses which group to live in. The mother-child relationships, hierarchical order, and dominance within a group remain unchanged and are updated only every G generations. Within each group, the hens follow their rooster to forage for food and may randomly steal food from others; the chicks follow their mother hen to find food.
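The role assignment described by these rules can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes lower fitness is better (negate the values for a maximization problem), lets every hen be a candidate mother, and uses hypothetical function and parameter names.

```python
import numpy as np


def assign_roles(fitness, n_roosters, n_chicks, rng):
    """Split a swarm into roosters, hens, and chicks by fitness (lower is better)."""
    order = np.argsort(fitness)                       # best individuals first
    n = len(order)
    roosters = order[:n_roosters]                     # best-adapted individuals
    hens = order[n_roosters:n - n_chicks]             # everyone in between
    chicks = order[n - n_chicks:]                     # least-adapted individuals
    # Each hen joins the group of a randomly chosen rooster.
    hen_group = {int(h): int(rng.choice(roosters)) for h in hens}
    # Each chick follows a randomly chosen hen, which acts as its mother.
    chick_mother = {int(c): int(rng.choice(hens)) for c in chicks}
    return roosters, hens, chicks, hen_group, chick_mother
```

In a full optimizer this function would be called once every G generations, so the hierarchy stays fixed between calls.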

The total number of individuals in the population is $N_{op}$, the number of hens is $N_h$, the number of roosters is $N_r$, the number of mother hens is $N_m$, and the number of chicks is $N_c$. The rooster dominates the foraging procedure and forages over a larger space.
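In the standard CSO formulation, which is assumed here because it uses the same symbols as the definitions in the text, the position of rooster $i$ in dimension $j$ at iteration $t$ is updated as:

$$
x_{i,j}^{t+1} = x_{i,j}^{t}\,\bigl(1 + \mathrm{Randn}(0, \sigma^{2})\bigr)
$$

$$
\sigma^{2} =
\begin{cases}
1, & f_i \le f_k \\
\exp\!\left(\dfrac{f_k - f_i}{|f_i| + \varepsilon}\right), & \text{otherwise}
\end{cases}
$$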

where $\varepsilon$ denotes a small constant that prevents the denominator from becoming zero; $k$ is another individual from the rooster population, different from the current individual $i$, with $k \in [1, N_r]$, $k \ne i$, and $f_k$ is its fitness; and $\mathrm{Randn}(0, \sigma^2)$ denotes a Gaussian distribution with mean 0 and variance $\sigma^2$.

The hen position can be updated by the following expression:
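In the standard CSO formulation, assumed here because it matches the symbols defined in the text, the hen update takes the form:

$$
x_{i,j}^{t+1} = x_{i,j}^{t}
+ S_1\,\mathrm{Rand}\,\bigl(x_{r_1,j}^{t} - x_{i,j}^{t}\bigr)
+ S_2\,\mathrm{Rand}\,\bigl(x_{r_2,j}^{t} - x_{i,j}^{t}\bigr)
$$

$$
S_1 = \exp\!\left(\frac{f_i - f_{r_1}}{|f_i| + \varepsilon}\right),
\qquad
S_2 = \exp\bigl(f_{r_2} - f_i\bigr)
$$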

where Rand refers to a random value between zero and one; $r_1$ denotes the rooster of the group to which hen $i$ belongs; and $r_2$ is an individual different from $r_1$, selected randomly from the rooster and hen populations.

The chick position can be updated using Eq. (6):
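In the standard CSO formulation, assumed here, the chick update reads:

$$
x_{i,j}^{t+1} = x_{i,j}^{t} + FL\,\bigl(x_{m,j}^{t} - x_{i,j}^{t}\bigr) \tag{6}
$$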

where $FL$ is the coefficient with which the chick follows its mother hen, with a value ranging from 0 to 2, and $m$ indicates the mother hen of chick $i$. The rooster occupies the dominant position in the entire population and guides the chicks and hens toward the optimum solution. The update formula in the standard CSO technique maintains population diversity well; however, it results in weak local search capability and low solution accuracy. A well-designed $x_{best}$ guidance model improves solution accuracy, but its excessive use makes individuals depend too heavily on the $x_{best}$ individual, which in turn reduces population diversity and increases the probability of being trapped in a local optimum. Many researchers have introduced the global optimal position into the rooster update to resolve these problems.

This study presents a rooster update model based on a parallel strategy that combines Levy flight and $x_{best}$ guidance, in order to address the poor results, premature convergence, and low accuracy caused by the introduction of the $x_{best}$ guidance process.

First, the best-individual and global best ($x_{gbest}$) guidance terms are introduced into the rooster update formula. To avoid an overdependence on $x_{best}$ that would trap the method in a local optimum, an adjustment coefficient $\omega$ is placed before the $x_{best}$ term.
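The exact form of the improved rooster update is not given in the text, so the following is only an illustrative sketch of how a Levy-flight step and an ω-weighted pull toward $x_{gbest}$ might be combined. The Levy step uses Mantegna's algorithm, which is an assumption, as are the additive combination, the `beta` and `step_scale` parameters, and all function names.

```python
import math

import numpy as np


def levy_step(size, beta, rng):
    """Draw heavy-tailed Levy-distributed steps with Mantegna's algorithm."""
    sigma_u = (math.gamma(1 + beta) * math.sin(math.pi * beta / 2)
               / (math.gamma((1 + beta) / 2) * beta * 2 ** ((beta - 1) / 2))
               ) ** (1 / beta)
    u = rng.normal(0.0, sigma_u, size)
    v = rng.normal(0.0, 1.0, size)
    return u / np.abs(v) ** (1 / beta)


def rooster_update_sketch(x, x_gbest, omega, rng, beta=1.5, step_scale=0.01):
    """Hypothetical rooster move: Levy exploration plus omega-weighted guidance."""
    # Exploration term: occasional long jumps help escape local optima.
    levy = step_scale * levy_step(x.shape, beta, rng) * x
    # Guidance term: omega limits how strongly the rooster is pulled to x_gbest.
    pull = omega * rng.random(x.shape) * (x_gbest - x)
    return x + levy + pull
```

A small ω keeps the swarm diverse, while a larger ω speeds up convergence toward the current global best at the risk of premature convergence.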

The choice of fitness function is a fundamental aspect of the ICSO algorithm. An encoded solution is used to evaluate the goodness of each candidate. Here, the accuracy value is the main criterion used to design the fitness function.

where TP stands for the true-positive value and FP represents the false-positive value.
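Because only TP and FP are defined, one fitness consistent with the text is the ratio TP / (TP + FP), which the optimizer would maximize. The sketch below assumes that form; the function name is hypothetical.

```python
def precision_fitness(tp: int, fp: int) -> float:
    """Fitness as TP / (TP + FP); returns 0.0 when nothing is predicted positive."""
    predicted_positive = tp + fp
    if predicted_positive == 0:
        return 0.0
    return tp / predicted_positive
```

For example, a candidate hyperparameter setting that yields 90 true positives and 10 false positives on validation data would receive a fitness of 0.9.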

ANFIS-based anomaly detection

For the identification and classification of anomalies, the ANFIS model is used. ANFIS is a robust modeling tool that simultaneously exploits the inference properties of FL and the learning abilities of artificial neural networks (ANN) (Alibak et al., 2022). A Takagi-Sugeno system with five interconnected consecutive layers (fuzzification, inference, normalization, interpolation, and target computation) and the fuzzy if-then rules of Eqs. (10) and (11) are built to model the problem.
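For a two-input, first-order Takagi-Sugeno system, the rule pair usually takes the following form. The input names $x$ and $y$, the rule outputs $f_1$ and $f_2$, and the premise labels $A_1$, $A_2$, $B_1$, $B_2$ are assumed notation, while the consequent parameter names follow the text:

$$
\text{Rule 1: if } x \text{ is } A_1 \text{ and } y \text{ is } B_1, \text{ then } f_1 = C_1 x + D_1 y + E_1 \tag{10}
$$

$$
\text{Rule 2: if } x \text{ is } A_2 \text{ and } y \text{ is } B_2, \text{ then } f_2 = C_2 x + D_2 y + E_2 \tag{11}
$$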

Here, $A_1$, $A_2$, $B_1$, and $B_2$ represent the premise parameters, while the adjustable consequent parameters are $C_1$, $C_2$, $D_1$, $D_2$, $E_1$, and $E_2$. Figure 2 depicts the structure of ANFIS.

The first layer assigns a membership function ($\eta$) to every node $j$ and evaluates the output signal $O_j^1$.

The output of the second layer is $O_j^2$.

The output of the third layer is $O_j^3$.

Next, Eq. (16) evaluates the output of the fourth layer, $O_j^4$.

Lastly, the ANFIS prediction for the target ($O_{ANFIS}$) is obtained using the following expression:
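In the standard ANFIS formulation, the five layers compute membership degrees, rule firing strengths, normalized strengths, weighted rule outputs, and their sum. The sketch below follows that formulation for two inputs and two rules. The Gaussian membership function, the input names `x` and `y`, and all function and parameter names are assumptions, not the authors' implementation.

```python
import numpy as np


def gaussian_mf(value, center, width):
    """Gaussian membership degree of `value` (assumed membership shape)."""
    return np.exp(-0.5 * ((value - center) / width) ** 2)


def anfis_forward(x, y, premise, consequent):
    """Forward pass of a two-input first-order Takagi-Sugeno ANFIS.

    premise:    {"A": [(center, width), ...], "B": [(center, width), ...]}
    consequent: [(C, D, E), ...] with one triple per rule
    """
    # Layer 1: fuzzification of each input.
    mu_a = np.array([gaussian_mf(x, c, w) for c, w in premise["A"]])
    mu_b = np.array([gaussian_mf(y, c, w) for c, w in premise["B"]])
    # Layer 2: firing strength of each rule (product of memberships).
    strength = mu_a * mu_b
    # Layer 3: normalization of the firing strengths.
    normalized = strength / strength.sum()
    # Layer 4: each rule's linear output weighted by its normalized strength.
    rule_out = np.array([c * x + d * y + e for c, d, e in consequent])
    weighted = normalized * rule_out
    # Layer 5: the prediction is the sum of the weighted rule outputs.
    return float(weighted.sum())
```

During training, the premise pairs and consequent triples would be the adjustable parameters; here they are simply passed in.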

RESULT ANALYSIS

The proposed model is simulated in Python. The results of the ICSO-VBAD methodology on the UCSD anomaly detection dataset (http://www.svcl.ucsd.edu/projects/anomaly/dataset.htm) are examined here.

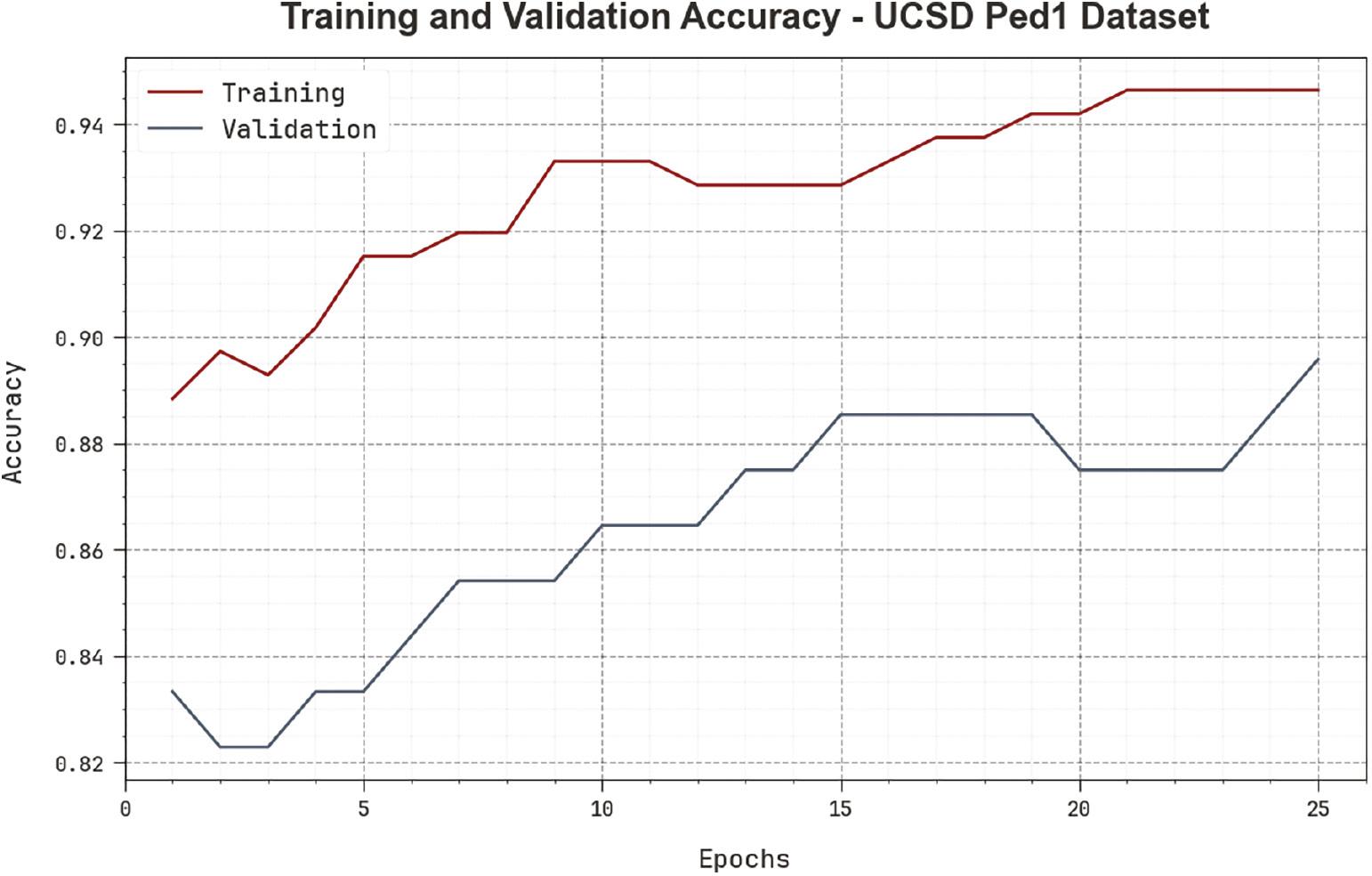

Figure 3 shows the training and validation accuracy of the ICSO-VBAD approach on the UCSD Ped1 dataset. The results indicate that the ICSO-VBAD approach attains higher accuracy values as the number of epochs increases. In addition, the validation accuracy exceeding the training accuracy suggests that the ICSO-VBAD algorithm learns effectively on the UCSD Ped1 dataset.

Figure 3: Accuracy curve of the ICSO-VBAD approach on the UCSD Ped1 dataset. Abbreviation: ICSO-VBAD, Improved Chicken Swarm Optimizer with Vision-based Anomaly Detection.

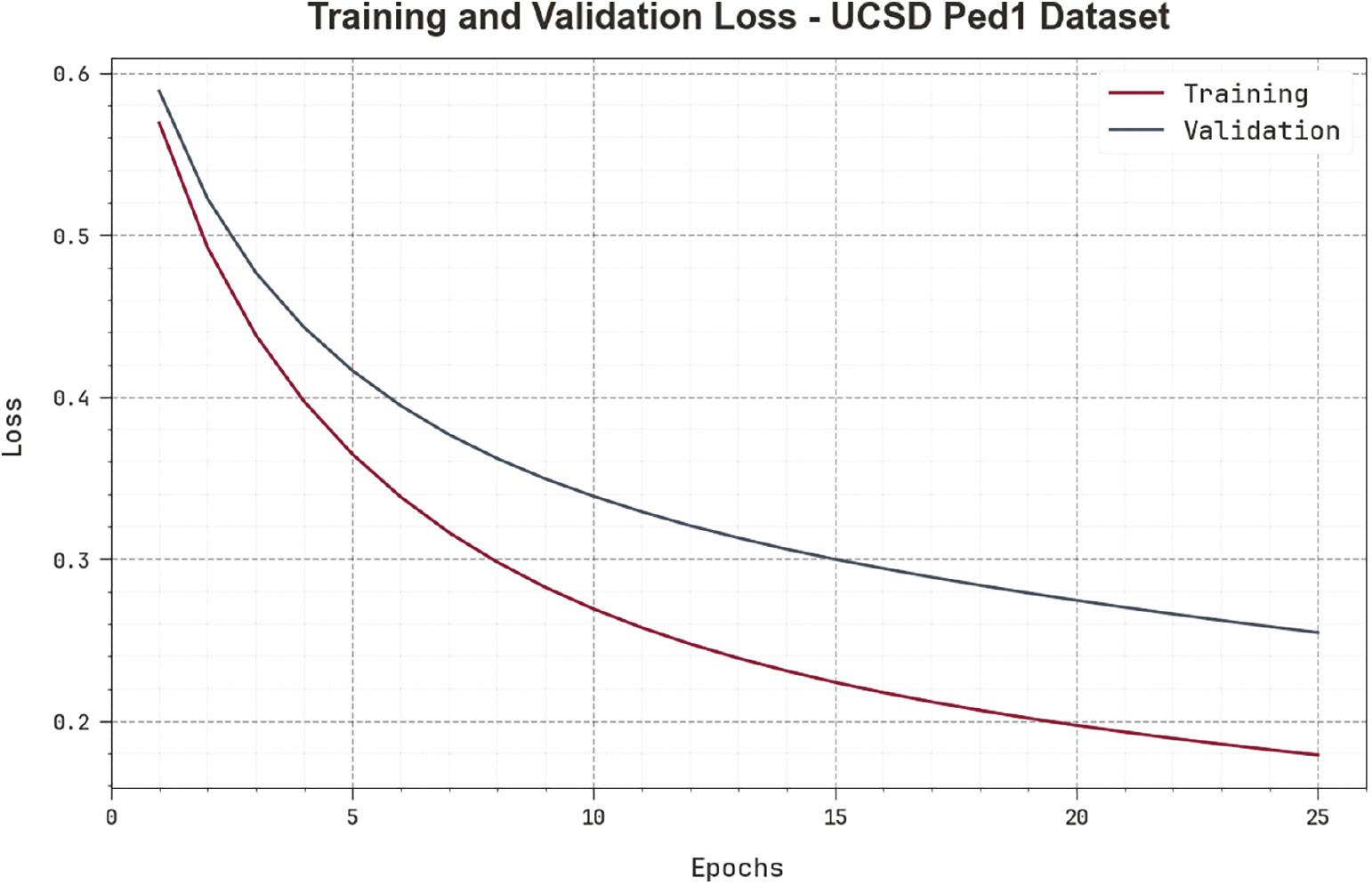

Figure 4 illustrates the training and validation loss of the ICSO-VBAD approach on the UCSD Ped1 dataset. The training and validation loss values stay close to each other, which suggests that the ICSO-VBAD algorithm learns effectively on the UCSD Ped1 dataset.

Figure 4: Loss curve of the ICSO-VBAD approach on the UCSD Ped1 dataset. Abbreviation: ICSO-VBAD, Improved Chicken Swarm Optimizer with Vision-based Anomaly Detection.

Table 1 compares the computational time (CT) of the ICSO-VBAD technique with recent approaches on the UCSD Ped1 dataset. The ICSO-VBAD technique reaches a CT of 1.50 ms, whereas the ACVD-SFN, AND-CS, ST-CNN ADLCS, DA-FCNN FADCS, and GPR-VAD and LHFR models require higher CT values of 3.17, 3.33, 53.33, 26.67, and 3.00 ms, respectively.

Table 1: CT outcome of the ICSO-VBAD approach with other algorithms on the UCSD Ped1 dataset.

| Methods | Computational time (ms) |
|---|---|
| ACVD-SFN | 3.17 |
| AND-CS | 3.33 |
| ST-CNN ADLCS | 53.33 |
| DA-FCNN FADCS | 26.67 |
| GPR-VAD and LHFR | 3.00 |
| ICSO-VBAD | 1.50 |

Abbreviation: ICSO-VBAD, Improved Chicken Swarm Optimizer with Vision-based Anomaly Detection.

Table 2 compares the ICSO-VBAD system with recent approaches on the UCSD Ped1 dataset in terms of area under the ROC curve (AUC) and equal error rate (EER). The ICSO-VBAD technique reaches a superior AUC of 87.87%, while the ACVD-SFN, AND-CS, ST-CNN ADLCS, DA-FCNN FADCS, and GPR-VAD and LHFR approaches provide lower AUC values of 67.50, 81.80, 85.00, 84.43, and 75.00%, respectively. Moreover, the ICSO-VBAD system attains a lower EER of 16.05%, whereas the ACVD-SFN, AND-CS, ST-CNN ADLCS, DA-FCNN FADCS, and GPR-VAD and LHFR approaches yield higher EER values of 31.00, 25.00, 24.00, 23.01, and 31.00%, respectively.

Table 2: AUC and EER outcome of the ICSO-VBAD approach with other algorithms on the UCSD Ped1 dataset.

| Methods | AUC (%) | EER (%) |
|---|---|---|
| ACVD-SFN | 67.50 | 31.00 |
| AND-CS | 81.80 | 25.00 |
| ST-CNN ADLCS | 85.00 | 24.00 |
| DA-FCNN FADCS | 84.43 | 23.01 |
| GPR-VAD and LHFR | 75.00 | 31.00 |
| ICSO-VBAD | 87.87 | 16.05 |

Abbreviation: ICSO-VBAD, Improved Chicken Swarm Optimizer with Vision-based Anomaly Detection.
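AUC and EER can both be read off the ROC curve. The sketch below shows one way to compute them, assuming frame-level anomaly scores where a higher score means more anomalous and a label of 1 marks an anomalous frame. Tied scores are not merged into a single threshold, and the function names are hypothetical; this is not the evaluation code used for the tables.

```python
import numpy as np


def roc_points(labels, scores):
    """Return (fpr, tpr) arrays obtained by sweeping the threshold downward."""
    labels = np.asarray(labels, dtype=bool)
    scores = np.asarray(scores, dtype=float)
    order = np.argsort(-scores, kind="stable")   # highest score first
    labels = labels[order]
    true_pos = np.cumsum(labels)                 # anomalies flagged so far
    false_pos = np.cumsum(~labels)               # normal frames flagged so far
    tpr = np.concatenate(([0.0], true_pos / labels.sum()))
    fpr = np.concatenate(([0.0], false_pos / (~labels).sum()))
    return fpr, tpr


def auc_score(fpr, tpr):
    """Area under the ROC curve by the trapezoidal rule."""
    return float(np.sum(np.diff(fpr) * (tpr[1:] + tpr[:-1]) / 2.0))


def equal_error_rate(fpr, tpr):
    """Error rate at the ROC point where FPR and FNR are closest."""
    fnr = 1.0 - tpr
    idx = int(np.argmin(np.abs(fpr - fnr)))
    return float((fpr[idx] + fnr[idx]) / 2.0)
```

A higher AUC and a lower EER both indicate better separation between normal and anomalous frames, which is the sense in which the values in the table are compared.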

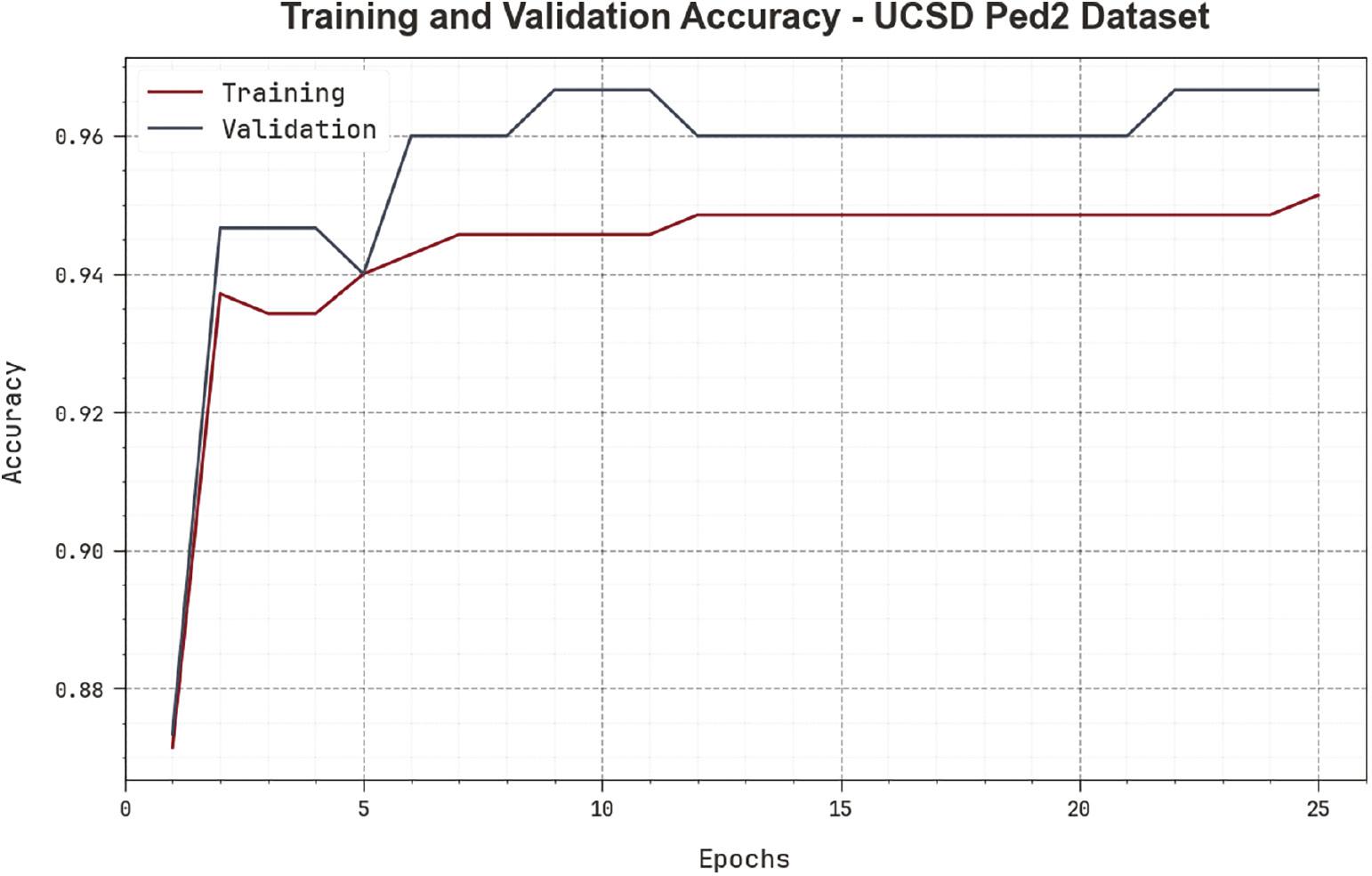

Figure 5 shows the training and validation accuracy of the ICSO-VBAD approach on the UCSD Ped2 dataset. The results indicate that the ICSO-VBAD method achieves higher accuracy values as the number of epochs increases. Also, the validation accuracy exceeding the training accuracy suggests that the ICSO-VBAD algorithm learns effectively on the UCSD Ped2 dataset.

Figure 5: Accuracy curve of the ICSO-VBAD approach on the UCSD Ped2 dataset. Abbreviation: ICSO-VBAD, Improved Chicken Swarm Optimizer with Vision-based Anomaly Detection.

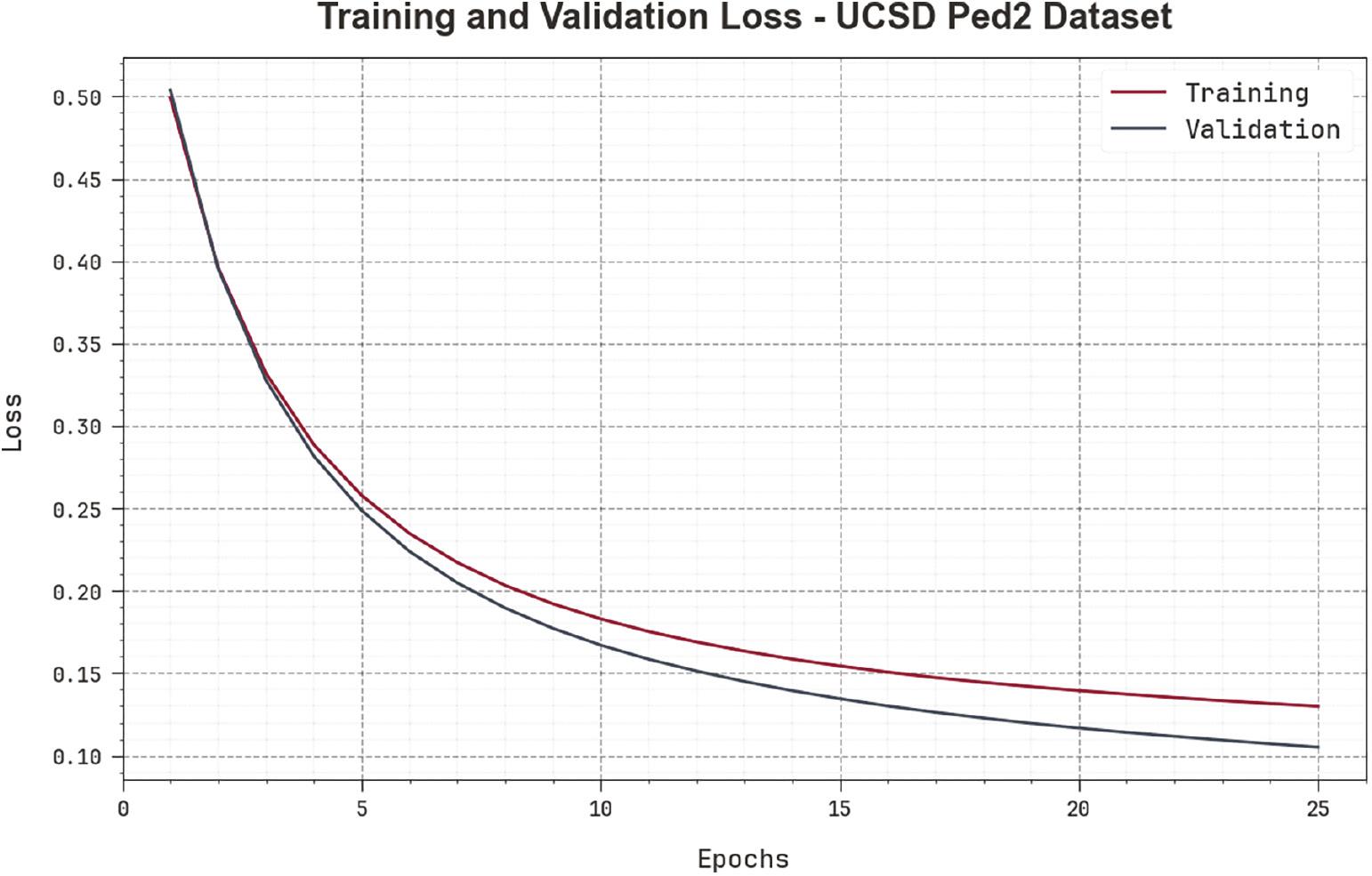

Figure 6 presents the training and validation loss of the ICSO-VBAD system on the UCSD Ped2 dataset. The training and validation loss values stay close to each other, which suggests that the ICSO-VBAD system learns effectively on the UCSD Ped2 dataset.

Figure 6: Loss curve of the ICSO-VBAD approach on the UCSD Ped2 dataset. Abbreviation: ICSO-VBAD, Improved Chicken Swarm Optimizer with Vision-based Anomaly Detection.

Table 3 compares the computational time (CT) of the ICSO-VBAD approach with existing methods on the UCSD Ped2 dataset. The ICSO-VBAD technique reaches a CT of 0.18 ms, whereas the UACAD-SSS, AND-CS, ACBD-SFM, AED 150FPS-MATLAB, and ADCS-MEM approaches require higher CT values of 3.33, 2.67, 3.17, 1.10, and 3.00 ms, respectively.

Table 3: CT outcome of the ICSO-VBAD approach with other algorithms on the UCSD Ped2 dataset.

| Methods | Computational time (ms) |
|---|---|
| UACAD-SSS | 3.33 |
| AND-CS | 2.67 |
| ACBD-SFM | 3.17 |
| AED 150FPS-MATLAB | 1.10 |
| ADCS-MEM | 3.00 |
| ICSO-VBAD | 0.18 |

Abbreviation: ICSO-VBAD, Improved Chicken Swarm Optimizer with Vision-based Anomaly Detection.

Table 4 compares the ICSO-VBAD approach with other systems on the UCSD Ped2 dataset in terms of AUC and EER. The ICSO-VBAD method attains a superior AUC of 88.90%, whereas the UACAD-SSS, AND-CS, ACBD-SFM, AED 150FPS-MATLAB, and ADCS-MEM algorithms give lower AUC values of 69.04, 82.90, 55.60, 85.54, and 81.00%, respectively. Furthermore, the ICSO-VBAD technique reaches a lower EER of 15.02%, while the UACAD-SSS, AND-CS, ACBD-SFM, AED 150FPS-MATLAB, and ADCS-MEM systems yield higher EER values of 25.00, 25.00, 42.00, 22.30, and 22.00%, respectively.

Table 4: AUC and EER outcome of the ICSO-VBAD approach with other algorithms on the UCSD Ped2 dataset.

| Methods | AUC (%) | EER (%) |
|---|---|---|
| UACAD-SSS | 69.04 | 25.00 |
| AND-CS | 82.90 | 25.00 |
| ACBD-SFM | 55.60 | 42.00 |
| AED 150FPS-MATLAB | 85.54 | 22.30 |
| ADCS-MEM | 81.00 | 22.00 |
| ICSO-VBAD | 88.90 | 15.02 |

Abbreviation: ICSO-VBAD, Improved Chicken Swarm Optimizer with Vision-based Anomaly Detection.

CONCLUSION

In this manuscript, we have proposed an automated anomaly detection system, named the ICSO-VBAD approach, for visually challenged people. The purpose of the ICSO-VBAD technique is to identify and classify anomalies in order to assist visually challenged people. To achieve this, the ICSO-VBAD technique uses the EfficientNet model to produce a collection of feature vectors. The ICSO algorithm is applied for the hyperparameter tuning of the EfficientNet model. For the identification and classification of anomalies, the ANFIS model is used. The ICSO-VBAD algorithm was tested on benchmark datasets, and the results showed lower computational time, higher AUC, and lower EER than recent approaches. In future, the performance of the proposed model can be improved using hybrid deep learning classifiers.